When Boris Cherny, creator of Anthropic’s Claude Code, casually shared his development workflow on X last week, he inadvertently set off one of the most closely scrutinized threads in recent AI discussion. The timing was striking: just days later, Nous Research released NousCoder-14B, an open-source coding model that reportedly matches larger proprietary systems despite being trained in just four days.

Key Takeaways

- Boris Cherny’s workflow revelation has Silicon Valley developers dissecting every detail of his approach to building what many consider the most advanced coding agent available

- Nous Research’s NousCoder-14B suggests that a competitive coding model can be trained in days, not months, using just 48 of Nvidia’s B200 GPUs

- The convergence of transparent development practices and rapid open-source innovation is reshaping how AI coding tools are built and deployed

- Together, these events signal a shift from secretive, resource-intensive AI development toward community-driven, efficient model creation

The Thread That Shook Silicon Valley

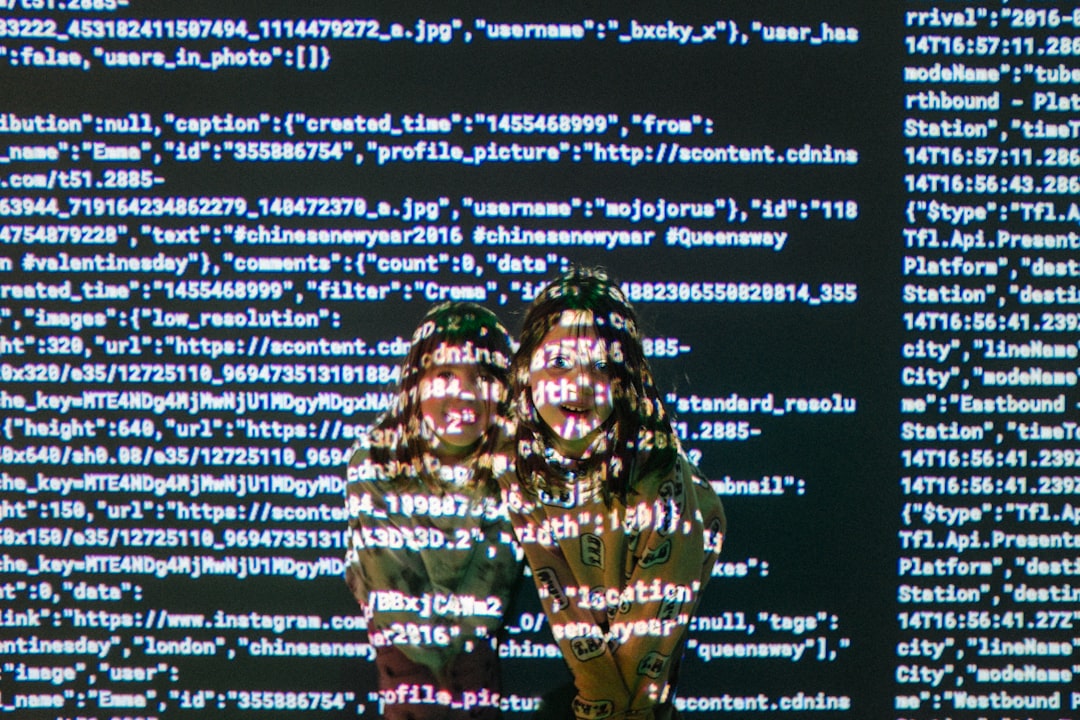

What started as a casual X post from Cherny quickly became a masterclass in AI development methodology. The engineering community didn’t just read his thread; they began reverse-engineering his entire approach to building what many consider the most sophisticated coding agent available today.

The fascination isn’t just academic curiosity. Cherny’s workflow represents a new paradigm in AI development where transparency and iterative improvement trump the traditional black-box approach that has dominated the industry. His insights into building Claude Code have become required reading for developers looking to understand how next-generation AI tools are actually constructed.

Four Days to Challenge the Giants

While developers were still processing Cherny’s revelations, Nous Research made an even bolder statement. Their NousCoder-14B model, trained on just 48 of Nvidia’s latest B200 GPUs over four days, reportedly matches or exceeds the performance of several larger proprietary systems.

This achievement is more than technical prowess: it directly challenges the narrative that only well-funded tech giants can create competitive AI models. Nous Research, backed by crypto venture firm Paradigm, has shown that strategic training approaches can deliver competitive results without the massive computational overhead typically associated with such projects.

| Model | Training Time | Hardware Required | Access Model | Performance Level |
|---|---|---|---|---|
| NousCoder-14B | 4 days | 48 Nvidia B200 GPUs | Open Source | Matches larger proprietary systems |
| Traditional AI Models | Weeks to months | Thousands of GPUs | Proprietary | Variable |
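
To put the NousCoder-14B figures in a single unit, the reported run can be converted to GPU-hours. The short sketch below uses only the two numbers given in the article (48 GPUs, four days). It assumes every GPU ran continuously for the full period, which the source does not confirm, and the `gpu_hours` helper is a name introduced here purely for illustration.

```python
# Figures reported in the article: 48 Nvidia B200 GPUs for 4 days.
GPUS = 48
DAYS = 4
HOURS_PER_DAY = 24


def gpu_hours(gpus: int, days: int) -> int:
    """Total GPU-hours, assuming all GPUs run for the whole period.

    Continuous, full utilization is an assumption made for this sketch;
    the article does not state how the hardware was actually scheduled.
    """
    return gpus * days * HOURS_PER_DAY


total = gpu_hours(GPUS, DAYS)
print(f"{total:,} GPU-hours")  # prints "4,608 GPU-hours"
```

The result, about 4,600 GPU-hours, is an upper bound under the full-utilization assumption. The article gives no comparable figure for the larger proprietary systems, so the number is useful for scale rather than as a direct comparison.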

The Open Source Acceleration Effect

The confluence of Cherny’s transparent development philosophy and Nous Research’s rapid deployment success suggests we’re witnessing a fundamental shift in AI development dynamics. The traditional model of secretive, resource-intensive development cycles is being challenged by community-driven approaches that prioritize speed, efficiency, and accessibility.

This trend extends beyond coding models. As engineering teams increasingly turn to AI for real-world applications, from automotive systems to medical devices, demand for transparent, rapidly deployable solutions is growing quickly. The ability to understand how these systems work, as Cherny demonstrated, becomes crucial for enterprise adoption.

From GitHub Copilot to Community-Driven Innovation

The timing of these developments is particularly significant given the broader context of AI coding tool evolution. While tools like GitHub Copilot and Cursor have established the market for AI-assisted development, the Cherny-Nous Research moment represents a new chapter focused on transparency and rapid iteration.

This shift has immediate implications for enterprise development teams. The combination of understanding how leading tools are built (thanks to Cherny’s insights) and having access to competitive open-source alternatives (via NousCoder-14B) creates unprecedented opportunities for customization and deployment flexibility.

What This Means For Developers

The convergence of these events signals that the AI development landscape is entering a new phase where transparency and rapid innovation cycles will define competitive advantage. For development teams, this means access to both the methodologies behind leading tools and viable open-source alternatives that can be deployed and customized without vendor lock-in.

More importantly, it suggests that the era of AI development as an exclusive domain of tech giants may be ending. When a four-day training cycle can produce enterprise-grade results, and when the creators of leading tools openly share their approaches, the barriers to innovation drop significantly.

Looking ahead, this democratization of AI development capabilities could accelerate innovation across industries far beyond traditional tech companies. As more organizations gain access to both the knowledge and the tools needed to build sophisticated AI systems, we’re likely to see a surge of specialized applications tailored to specific industry needs, all built on the foundation of transparency and rapid iteration that these events have highlighted.